TOPLINE

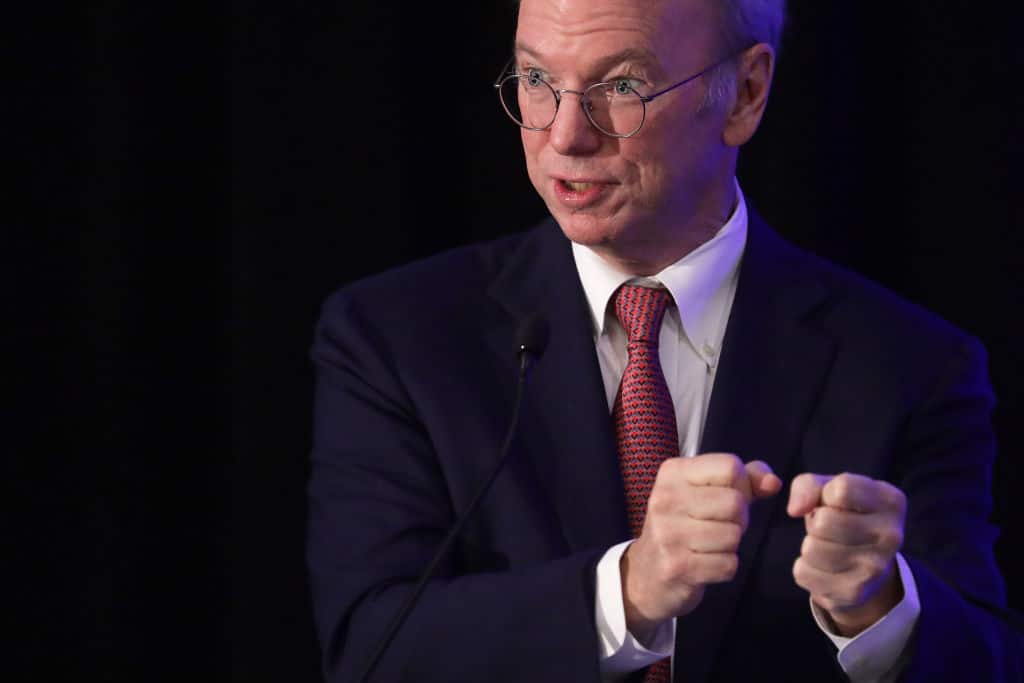

Eric Schmidt, who served as Google’s CEO from 2001 to 2011, said Wednesday that artificial intelligence poses possible “existential risks” in which people could be “harmed or killed,” adding to concerns from other tech executives over the development of AI.

KEY FACTS

Schmidt, who spoke Wednesday at the Wall Street Journal’s CEO Council Summit, warned that AI poses existential risk, which he said “is defined as many, many, many, many people harmed or killed.”

Schmidt, who also served as executive chairman of Alphabet from 2015 to 2017, suggested that regulating AI is a “broader question for society,” adding that he believes it is unlikely the U.S. will establish a regulatory agency for AI.

Sundar Pichai, CEO of Google and Alphabet, wrote in the Financial Times that “AI is the most profound technology humanity is working on today,” adding it is important to “make sure as a society we get it right.”

In March, Twitter CEO Elon Musk and Apple cofounder Steve Wozniak signed an open letter urging AI labs to “immediately pause” work to slow down an “out-of-control race” to develop the technology; other signatories included politician Andrew Yang, Skype cofounder Jaan Tallinn, Pinterest cofounder Evan Sharp and Ripple cofounder Chris Larsen.


OpenAI CEO Sam Altman told ABC News in March that his company was “a little bit scared” over AI’s potential, adding it “will be the greatest technology humanity has yet developed.”

CRUCIAL QUOTE

Schmidt warned, “There are scenarios, not today, but reasonably soon, where these systems will be able to find zero-day exploits in cyber issues, or discover new kinds of biology,” adding, “When that happens, we want to be ready to know how to make sure these things are not misused by evil people.”

CONTRA

Billionaire philanthropist Bill Gates has praised the impact AI could have on society, noting he has seen “stunning” advancements in recent months. Microsoft, which Gates cofounded in 1975, has reportedly invested $10 billion in OpenAI. In a blog post, Gates acknowledged the issues raised in the letter signed by Musk and others, though he argued that the government should regulate the technology to address social concerns and ensure it is used for good. Gates also suggested AI could be used to improve productivity, reduce preventable child deaths around the world and narrow inequities in American education.

FORBES VALUATION

Schmidt, who also cofounded the venture capital firm Innovation Endeavors, is worth $20.1 billion, according to our estimates.

KEY BACKGROUND

Schmidt’s warning follows his time as chair of the National Security Commission on Artificial Intelligence, which released a report in 2021 that found the U.S. government was “not today prepared for this new technology.” Schmidt also called for increasing the nation’s AI research and development budget to $2 billion in 2022, then doubling it each year until it reached $32 billion in 2026. The White House released a plan earlier this month to tackle possible risks from AI, including $140 million in funding to establish research institutes that would drive responsible innovation. Calls for regulating AI have accelerated in recent months following the launch of OpenAI’s ChatGPT late last year, and other companies, including Google, have since released their own AI chatbots.

FURTHER READING

Elon Musk And Tech Leaders Call For AI ‘Pause’ Over Risks To Humanity (Forbes)
